LOGS

Data Description

The LOGS event is used in SAP to view any text file from the SAP application server.

Potential Use Cases

This event could be used for the following scenarios:

Correlate batch job failures to the work process trace files.

Identify environment-wide concerns if a work process cancels.

Alert on specific error messages in the environment within the server logs.

Metric Filters

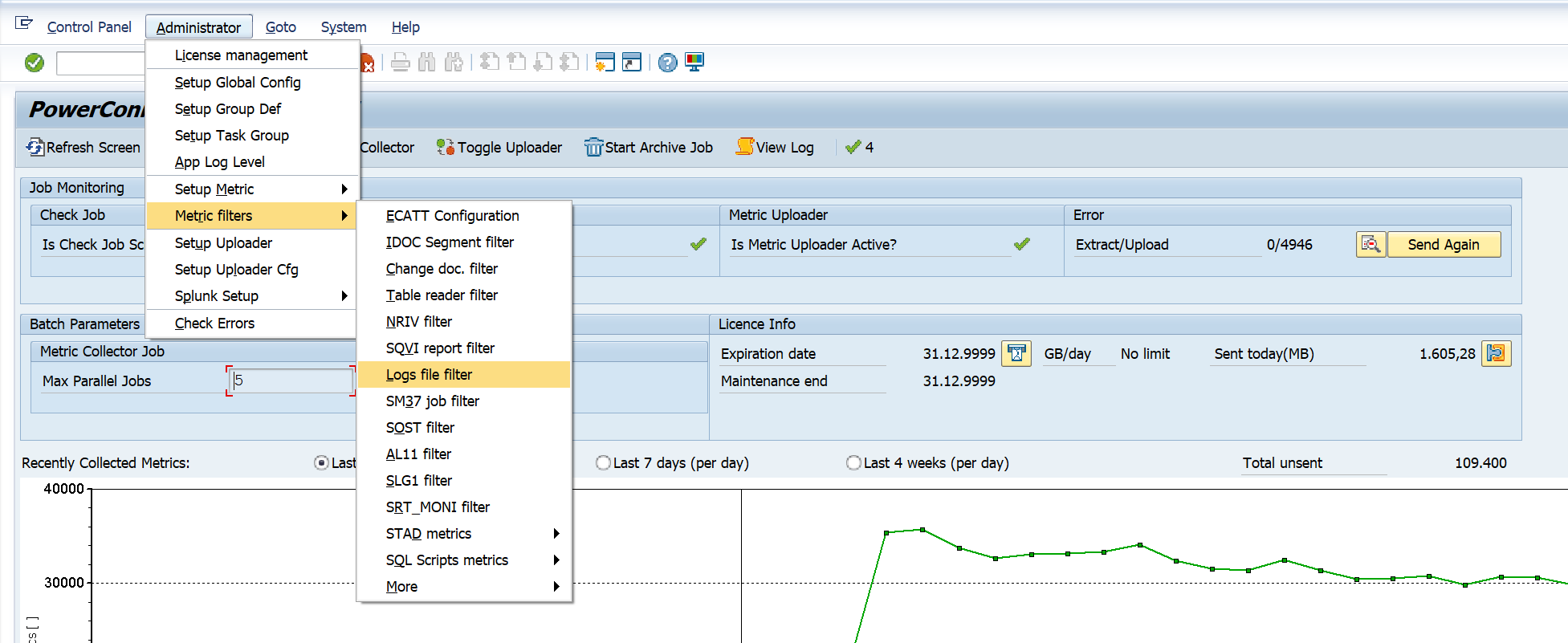

To find the metric filter for the LOGS extractor, log in to the managed system and execute the /n/bnwvs/main transaction. Then go to Administrator → Metric Filters → Logs file filter.

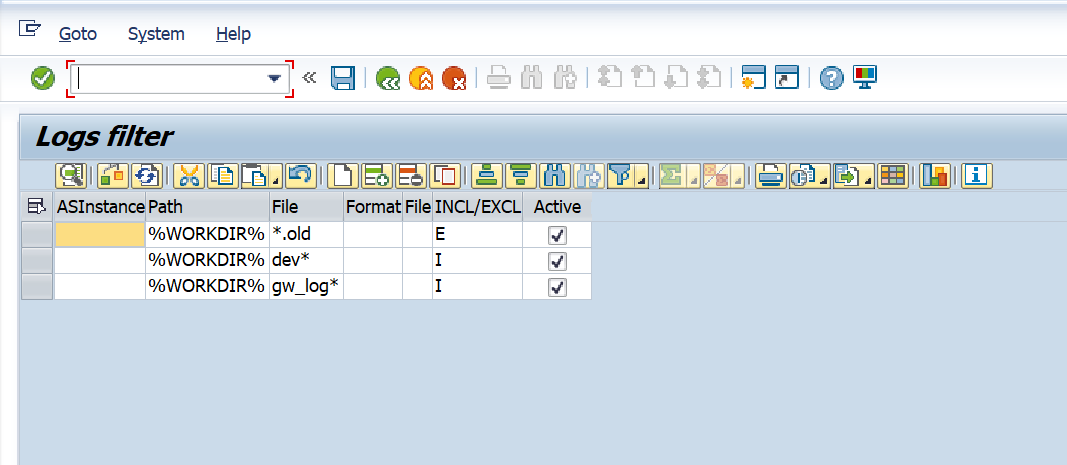

From there you will be brought to the configuration screen for the LOGS extractor.

Below are the filter options and their associated definitions.

ASInstance - This field is optional. The instance from which the data will be extracted. To extract the data from all instances, leave the field empty.

Path - This field is mandatory. The file path from which the data will be extracted.

File Name - This field is mandatory, and is used to specify the file name which will be collected by the extractor.

Log Format - This field is optional. It can be populated to define the HTTP log file format. The following options are available: CLF, CLFMOD, SAP, SAPSMD, SAPSMD2 (these correspond to the predefined SAP HTTP log format options).

Logical File - This field is optional. It can be populated for security reasons, so that the path is verified against the indicated logical file name.

INCL/EXCL - This field is mandatory. The include or exclude option is used to include or exclude a value from data extraction. To include values that match the data extraction parameter please enter “I”. To exclude values that match the data extraction parameter please enter “E”.

Active - This field is mandatory. This checkbox can be used to enable and disable the data extraction. To enable the data extraction ensure that the checkbox is selected.

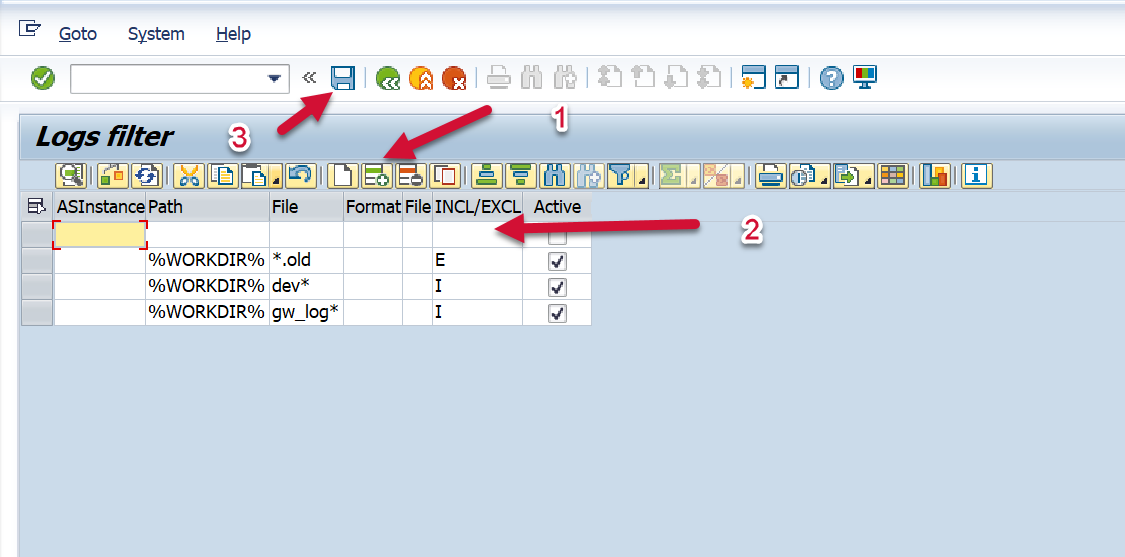

To enter a new filter value, select the add row option, and enter the values based on the options above. Save, and the data will be extracted.
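The filter options above can be summarized as a small validation sketch. This is only an illustration of which fields are mandatory and which values are allowed according to the definitions above; the class `LogsFilterRow`, its attribute names, and the example file name `dev_w0` are hypothetical and are not part of the product.

```python
from dataclasses import dataclass
from typing import List, Optional

# Log Format values listed in the filter option definitions above.
LOG_FORMATS = {"CLF", "CLFMOD", "SAP", "SAPSMD", "SAPSMD2"}


@dataclass
class LogsFilterRow:
    """Hypothetical model of one row in the Logs file filter table."""

    path: str                          # mandatory
    file_name: str                     # mandatory
    incl_excl: str                     # mandatory: "I" (include) or "E" (exclude)
    active: bool = True                # checkbox: extraction enabled when selected
    as_instance: Optional[str] = None  # optional: empty means all instances
    log_format: Optional[str] = None   # optional: one of LOG_FORMATS
    logical_file: Optional[str] = None # optional: used to verify the path

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the row looks valid."""
        problems = []
        if not self.path.strip():
            problems.append("Path is mandatory")
        if not self.file_name.strip():
            problems.append("File Name is mandatory")
        if self.incl_excl not in ("I", "E"):
            problems.append("INCL/EXCL must be 'I' or 'E'")
        if self.log_format and self.log_format not in LOG_FORMATS:
            problems.append("Unknown Log Format: " + self.log_format)
        return problems


if __name__ == "__main__":
    # Example row: the path is the one used later in this document,
    # the file name is an assumed example.
    row = LogsFilterRow(
        path=r"E:\usr\sap\ID8\DVEBMGS00\work",
        file_name="dev_w0",
        incl_excl="I",
    )
    print(row.validate())
```

Checking a row this way before entering it in the transaction can help catch a missing mandatory value or a mistyped Log Format.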

Splunk Event

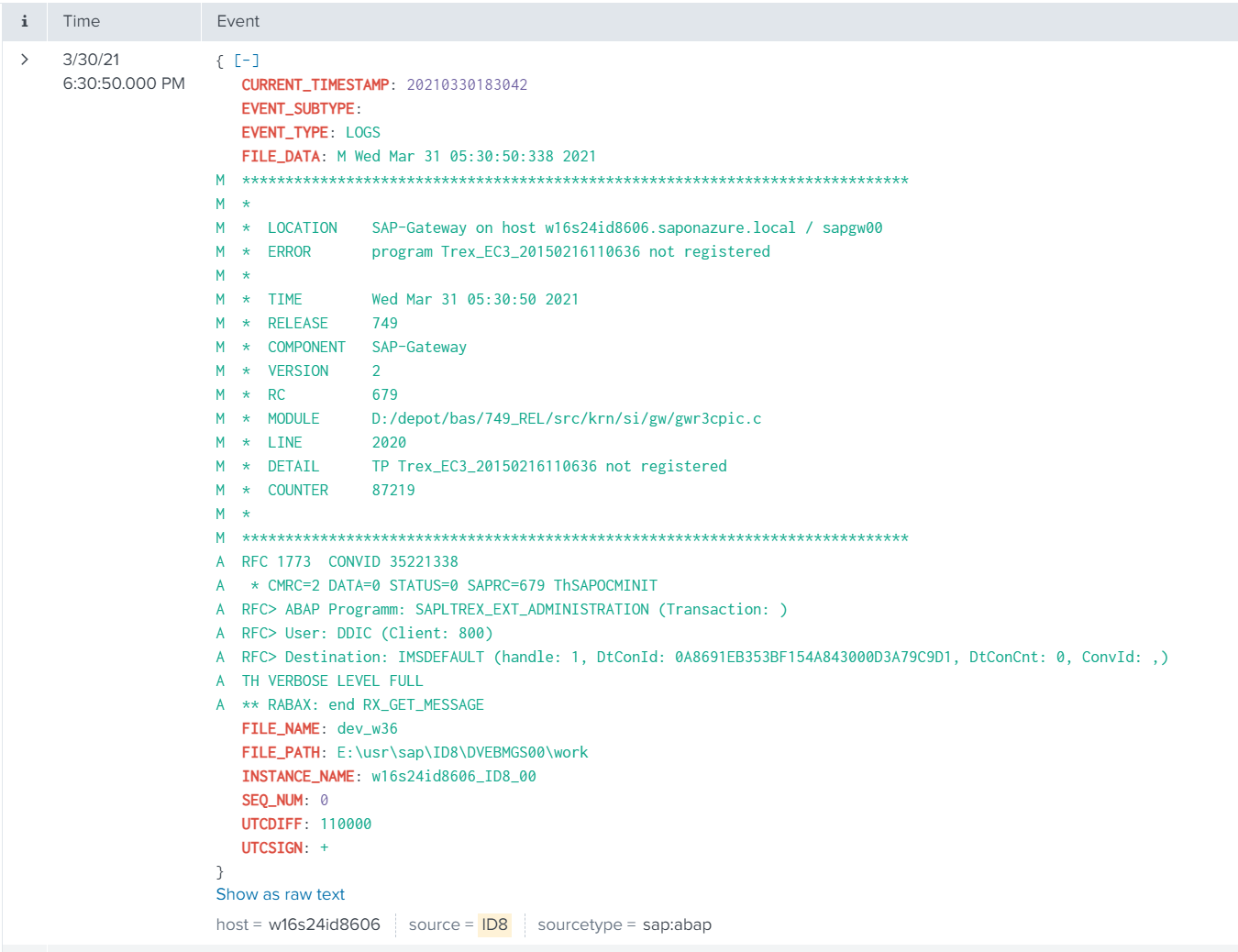

The event will look like this in Splunk:

SAP Navigation

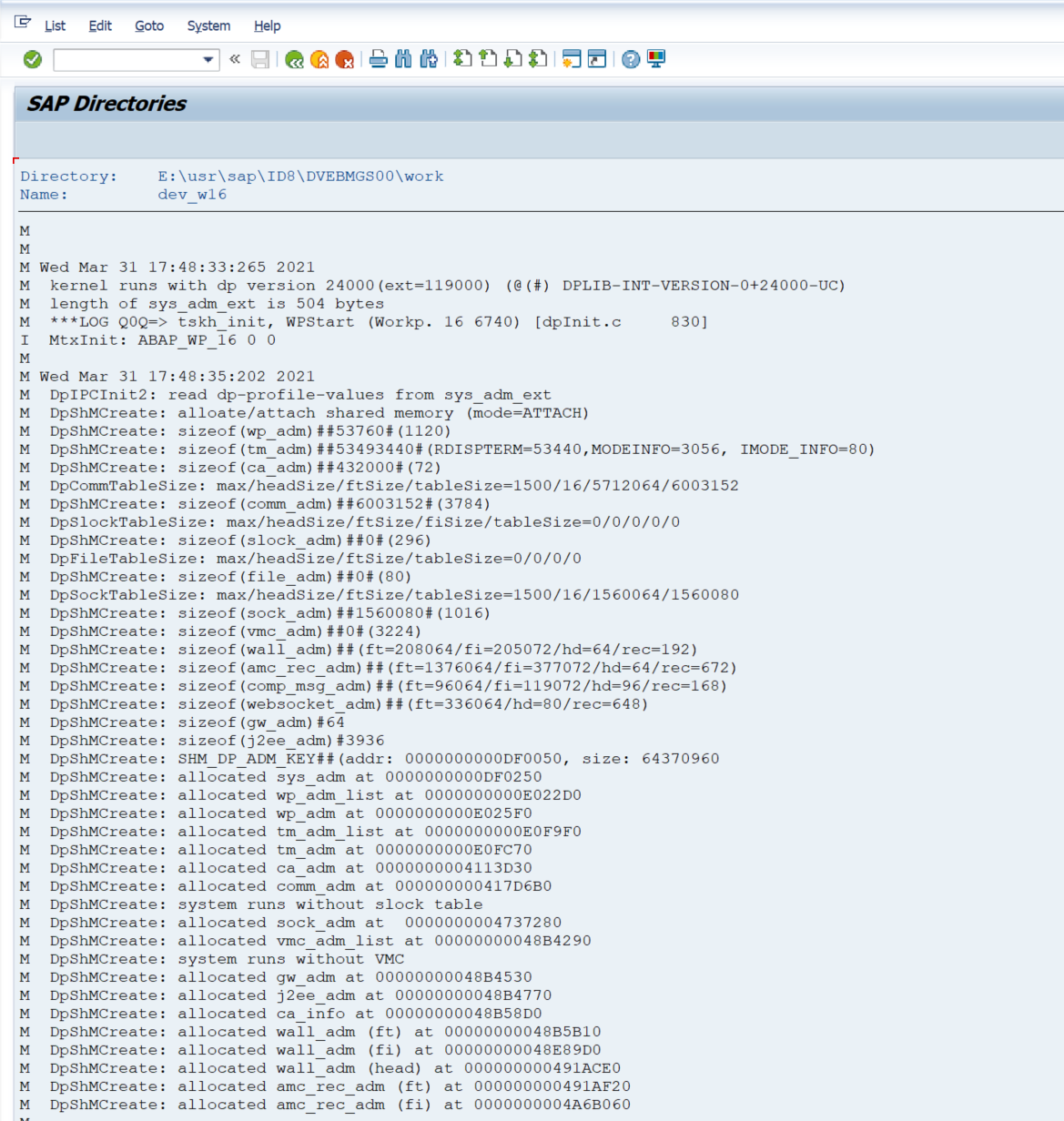

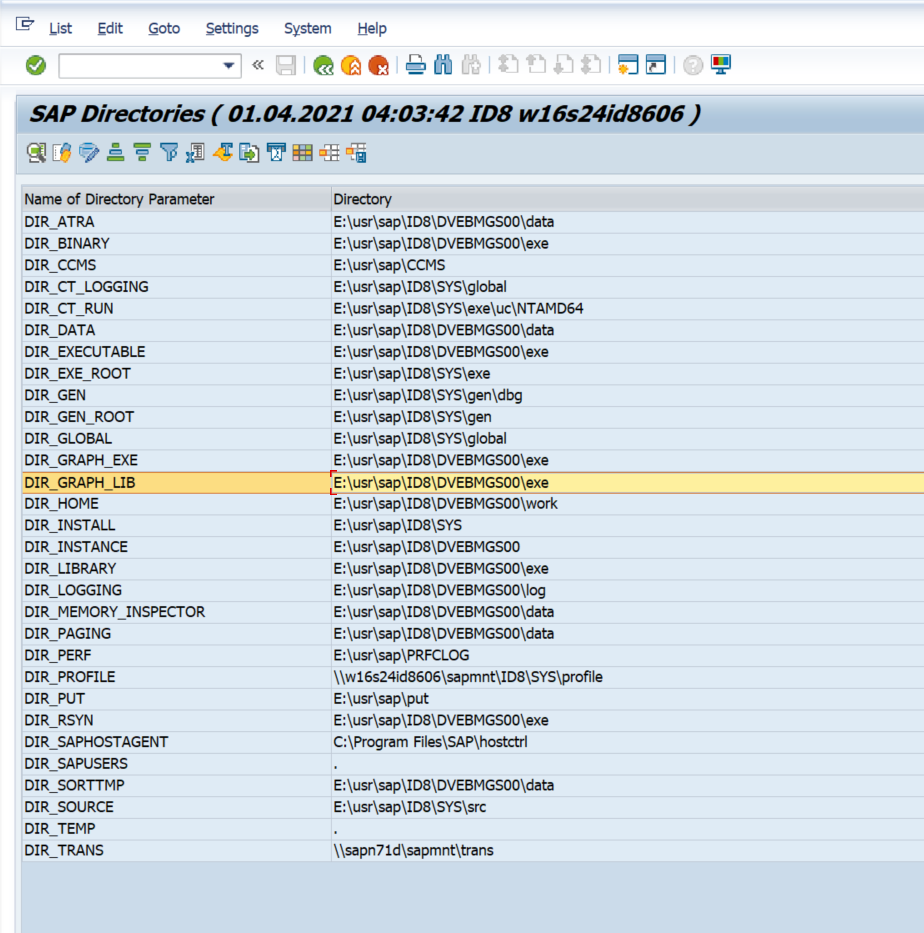

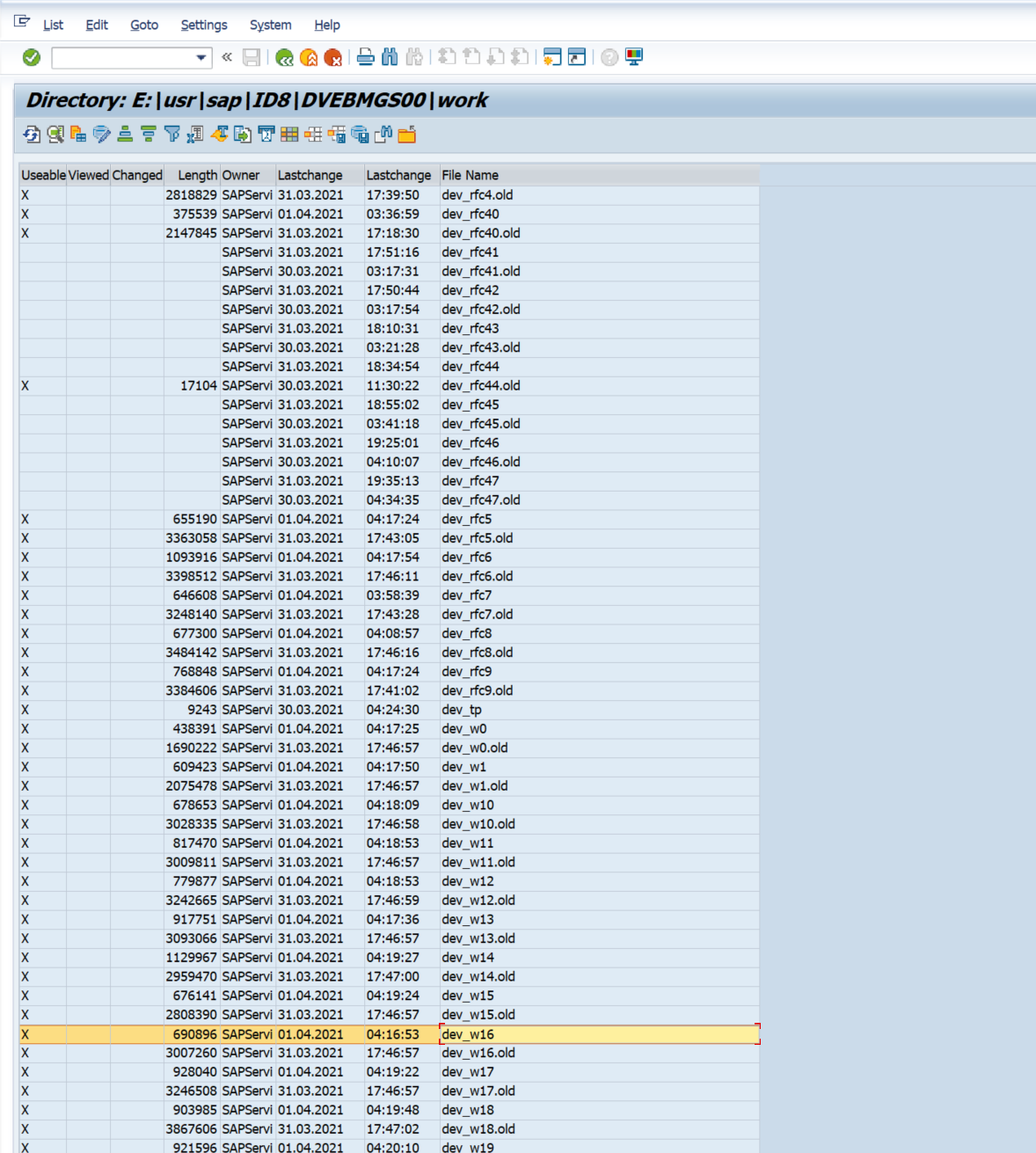

Navigate to this data by logging in to the application server and accessing the directory that was specified in the Metric Filters configuration. Alternatively, the data can be accessed by using the AL11 t-code. To do so, execute the AL11 transaction in the managed system. For this example we will access a file in the following directory path: E:\usr\sap\ID8\DVEBMGS00\work. Once you are in the transaction, double-click on the directory path that was specified in the Metric Filters configuration.

Then double-click on the file of interest, and you will be able to view the content of the log.

The information displayed will match the information that is passed to Splunk. Once the log file format is specified in Metric Filters, the extractor is able to extract the timestamp from each line, so those logs are pushed with timestamp metadata. The remaining text files are sent as text with the timestamp of when the data was extracted (not the actual time at which the entry was added to the log).
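The timestamp behavior described above can be sketched as follows. This is not the extractor's implementation; it is a minimal illustration that assumes a line in Common Log Format (CLF), where the timestamp appears in square brackets such as `[10/Oct/2000:13:55:36 -0700]`. The function name `event_time` and the sample lines are hypothetical.

```python
import re
from datetime import datetime, timezone

# Bracketed CLF timestamp, e.g. [10/Oct/2000:13:55:36 -0700]
CLF_TIMESTAMP = re.compile(
    r"\[(\d{2}/[A-Za-z]{3}/\d{4}:\d{2}:\d{2}:\d{2} [+-]\d{4})\]"
)


def event_time(line: str, extracted_at: datetime) -> datetime:
    """Return the timestamp parsed from the line, if one is present.

    Falls back to the extraction time for plain text lines, which mirrors
    the behavior described for files without a parsable log format.
    """
    match = CLF_TIMESTAMP.search(line)
    if match:
        try:
            # %b relies on English month abbreviations (default C locale).
            return datetime.strptime(match.group(1), "%d/%b/%Y:%H:%M:%S %z")
        except ValueError:
            pass  # looked like a timestamp but was not a valid date
    return extracted_at


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    clf_line = '127.0.0.1 - - [10/Oct/2000:13:55:36 -0700] "GET / HTTP/1.0" 200 2326'
    text_line = "plain text line without a timestamp"
    print(event_time(clf_line, now))   # time taken from the log line
    print(event_time(text_line, now))  # time of extraction
```

The practical consequence is that, for plain text files, the event time in Splunk reflects when the extractor ran rather than when the line was written, which matters when correlating these events with other data.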